I engineer autonomous systems and trading infrastructure.

Turning research code into production reality.

Not just endpoints, but distributed adaptive intelligence.

ayushv Focus Areas

I specialize in building production systems as a quant developer and AI developer.

AI Dev & Systems

As an AI developer and ML engineer, I build production-grade model pipelines, from auto-tuned boosting ensembles to custom deep learning architectures.

Stack: PyTorch, TensorFlow, AutoGluon, and XGBoost/LightGBM/CatBoost, deployed on AWS SageMaker, GCP Vertex AI, or Azure ML.

Voice + Multimodal AI

Real-time assistants with STT/TTS, camera/vision models, and tool execution for ops and research teams.

Streaming transcription, neural voice libraries, guardrails, and narrated transcripts for compliance reviews.

Quant Dev & Trading Infra

Signals, pair trading engines, and telemetry-rich pipelines tuned for low latency and auditability.

Python + FastAPI services, Redis/PostgreSQL for state, Prometheus dashboards, and LangGraph risk orchestrators.

Agentic Workflows

Custom orchestration that coordinates multiple providers, humans-in-loop, and SaaS tooling.

LangGraph over LangChain for deterministic control, plus governance hooks, evaluation harnesses, and playbooks by ayushv.

Full Stack Dev & Dashboards

Executive-ready analytics for revenue, risk, or ops wrapped in delightful product UX.

Next.js frontends, fast charts, embedded auth, and automated QA.

Data & Platform Ops

Foundational data ingestion, observability, and compliance rails for lean teams.

FastAPI + Redis, PostgreSQL/pgvector, tracing, and deployment patterns that stay sane on lean infra.

OSINT & Security

Ethical hacking and open-source intelligence pipelines for threat detection and data gathering.

Automated recon scanners, public data correlation, and security audit harnesses for deployed AI agents.

Featured Projects

A selection of recent projects I've worked on.

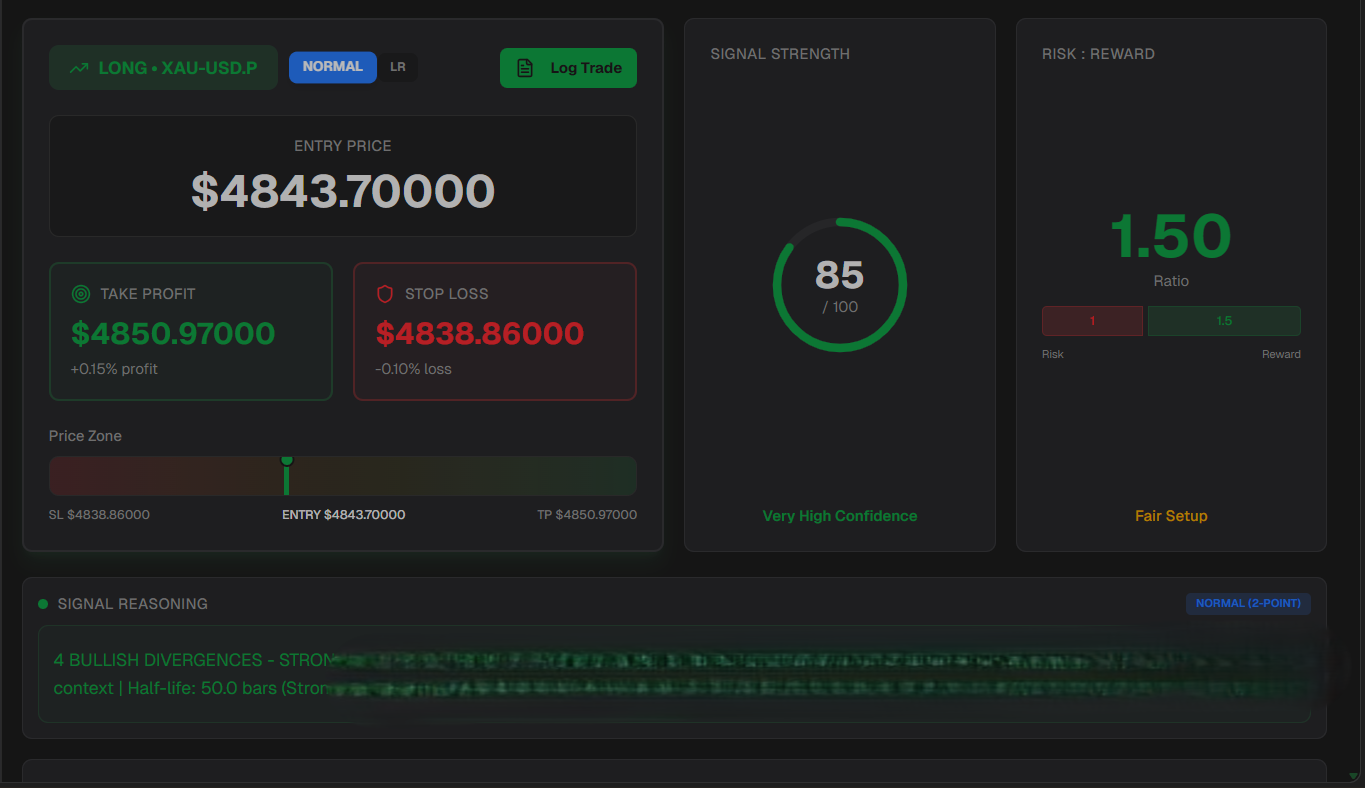

Divergence Detector (Advanced)

Event-driven market scanner processing 500+ ticks/s

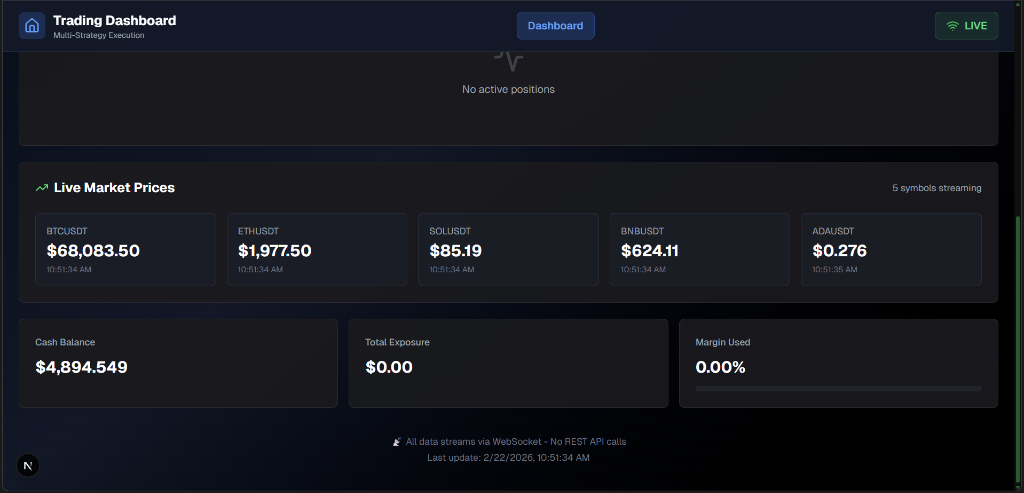

Regime-Switching Scalper

Production 24/7 multi-asset scalper with runtime market regime detection

Code Snippets

Small excerpts from real systems — the kind of details that make products reliable.

Stable content hashing + near-duplicate detection

A layered dedupe strategy: stable hash → pg_trgm similarity → pure-Python fallback.

```python
import hashlib
import re


# normalize + stable hash (accounts for platform & angle)
def normalize_content(content: str) -> str:
    normalized = content.lower()
    # strip punctuation first, then collapse whitespace, so that removed
    # punctuation ("a - b") cannot leave double spaces behind
    normalized = re.sub(r"[^\w\s]", "", normalized)
    normalized = re.sub(r"\s+", " ", normalized)
    return normalized.strip()


def compute_content_hash(content: str, platform: str, angle: str) -> str:
    normalized = normalize_content(content)
    hash_input = f"{normalized}|{platform.lower()}|{angle.lower()}"
    return hashlib.sha256(hash_input.encode("utf-8")).hexdigest()


# orchestrator: exact-hash → trigram → fallback
# (the check_* helpers are defined elsewhere in the codebase)
async def check_duplicate(session, campaign_id, content, platform, angle, threshold=0.7):
    content_hash = compute_content_hash(content, platform, angle)

    exact = await check_exact_duplicate(session, campaign_id, content_hash)
    if exact:
        return {"is_duplicate": True, "type": "exact", "existing_post_id": exact.id}

    near = await check_near_duplicate_trgm(session, campaign_id, content, threshold)
    if not near:
        near = await check_near_duplicate_fallback(session, campaign_id, content, threshold)

    return {"is_duplicate": bool(near), "type": "near" if near else None, "content_hash": content_hash}
```
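The pure-Python fallback is not part of the excerpt above. As a rough sketch of what such a fallback could look like, the block below approximates pg_trgm-style similarity: each word is padded with two leading spaces and one trailing space, and similarity is the share of trigrams two texts have in common. The names `trigrams`, `trigram_similarity`, and `find_near_duplicate` are hypothetical, and the tokenization only approximates what PostgreSQL does.

```python
import re
from typing import Optional, Sequence


def trigrams(text: str) -> set[str]:
    """Word-padded trigram set, approximating pg_trgm's behaviour."""
    grams: set[str] = set()
    for word in re.findall(r"\w+", text.lower()):
        padded = f"  {word} "  # two leading spaces, one trailing space
        for i in range(len(padded) - 2):
            grams.add(padded[i:i + 3])
    return grams


def trigram_similarity(a: str, b: str) -> float:
    """Shared trigrams divided by all distinct trigrams (0.0 to 1.0)."""
    ta, tb = trigrams(a), trigrams(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def find_near_duplicate(
    content: str, existing: Sequence[str], threshold: float = 0.7
) -> Optional[int]:
    """Return the index of the first existing text at or above the threshold."""
    for index, candidate in enumerate(existing):
        if trigram_similarity(content, candidate) >= threshold:
            return index
    return None
```

A linear scan like this only makes sense when the database extension is unavailable and the candidate set per campaign is small; the indexed pg_trgm path remains the primary check.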

JSON-LD graph injector

Keeps schema composable while emitting one canonical @context (and @graph when needed).

```tsx
type JsonLdProps = {
  data: Record<string, unknown> | Array<Record<string, unknown>>;
};

export function JsonLd({ data }: JsonLdProps) {
  const payload = Array.isArray(data) ? data : [data];
  const graphNodes = payload.map((node) => {
    const { "@context": _ctx, ...rest } = node as Record<string, unknown>;
    return rest;
  });

  const json =
    graphNodes.length === 1
      ? { "@context": "https://schema.org", ...graphNodes[0] }
      : { "@context": "https://schema.org", "@graph": graphNodes };

  return (
    <script
      type="application/ld+json"
      dangerouslySetInnerHTML={{ __html: JSON.stringify(json) }}
    />
  );
}
```

From ML pipelines to multi-tier automations.

I design and build systems that connect data, models, and workflows into practical tools — from quant research engines to multi-model AI platforms.

AI & Machine Learning

20 skills: Models, training, serving, and applied LLM work.

Backend & Data

16 skills: APIs, data pipelines, and persistence.

Frontend & UI

8 skills: Interfaces, dashboards, and interaction design.

Infra & DevOps

11 skills: Environments, deployment, and reliability.

Automation & Agents

11 skills: Multi-tier workflows and automation.

Trading & Quant

6 skills: Research systems and strategy tooling.

Systems & Architecture

5 skills: Blueprinting and high-level thinking.

General Engineering

5 skills: Everyday tools that keep things moving.

One Lead, Scalable Support

I operate as a principal engineer — architecting, coding, and shipping the core myself. For specialist needs, I bring in a trusted network. You get solo-founder agility with full-agency capability.

AI Engineering & Market Insights

Technical deep-dives for the 2026 AI and Fintech landscape.

How to build a production-grade RAG pipeline in 2026?

Building a production-ready Retrieval-Augmented Generation (RAG) system today requires more than just a vector database. It demands enterprise-grade orchestration with tools like LangGraph, multi-stage retrieval (re-ranking), and robust evaluation harnesses to keep outputs consistent and reduce hallucinations.
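To make "multi-stage retrieval" concrete, here is a minimal two-stage sketch using only the standard library: a cheap recall pass narrows the corpus, then a costlier scorer re-orders the survivors. The function names are hypothetical, and the scorers are toy stand-ins; in a real pipeline the first stage would typically be a vector or keyword index and the second a cross-encoder or similar re-ranking model.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"\w+", text.lower())


def recall_stage(query: str, docs: list[str], k: int) -> list[int]:
    """Stage 1: cheap recall by counting distinct query terms present in each doc."""
    query_terms = set(tokenize(query))
    scored = [(len(query_terms & set(tokenize(doc))), i) for i, doc in enumerate(docs)]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [i for _, i in scored[:k]]


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rerank_stage(query: str, docs: list[str], candidates: list[int], top_n: int) -> list[int]:
    """Stage 2: re-order candidates with a costlier score (term-frequency cosine here)."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        candidates,
        key=lambda i: (-cosine(query_vec, Counter(tokenize(docs[i]))), i),
    )
    return ranked[:top_n]


def retrieve(query: str, docs: list[str], k: int = 20, top_n: int = 3) -> list[int]:
    return rerank_stage(query, docs, recall_stage(query, docs, k), top_n)
```

Keeping the two stages as separate functions makes each one easy to swap out and to evaluate in isolation.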

What is the best tech stack for real-time AI avatars?

Real-time AI avatars require a low-latency bridge between STT, LLM inference, and TTS. I use Next.js for the interface, FastAPI for the backend, and specialized providers like Simli or Deepgram for sub-second lip-sync and voice response times.
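The STT, LLM, and TTS bridge can be pictured as three concurrent stages connected by bounded queues, so each stage starts working on a chunk as soon as the previous one emits it. The sketch below is illustrative only: the stage bodies are stubs that wrap strings, and every name in it is hypothetical rather than any provider's API.

```python
import asyncio

_DONE = None  # sentinel that tells the next stage the stream has ended


async def stt_stage(audio_chunks: list[str], out_q: asyncio.Queue) -> None:
    for chunk in audio_chunks:
        await out_q.put(f"text({chunk})")  # stub for streaming transcription
    await out_q.put(_DONE)


async def llm_stage(in_q: asyncio.Queue, out_q: asyncio.Queue) -> None:
    while (item := await in_q.get()) is not _DONE:
        await out_q.put(f"reply({item})")  # stub for model inference
    await out_q.put(_DONE)


async def tts_stage(in_q: asyncio.Queue, sink: list[str]) -> None:
    while (item := await in_q.get()) is not _DONE:
        sink.append(f"audio({item})")  # stub for speech synthesis


async def run_pipeline(audio_chunks: list[str]) -> list[str]:
    # Bounded queues apply backpressure if a downstream stage falls behind.
    text_q: asyncio.Queue = asyncio.Queue(maxsize=8)
    reply_q: asyncio.Queue = asyncio.Queue(maxsize=8)
    sink: list[str] = []
    await asyncio.gather(
        stt_stage(audio_chunks, text_q),
        llm_stage(text_q, reply_q),
        tts_stage(reply_q, sink),
    )
    return sink
```

Calling `asyncio.run(run_pipeline(["a", "b"]))` pushes both chunks through all three stubs in order.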

How to optimize statistical arbitrage strategies for crypto?

Optimizing cointegration-based pairs trading in crypto involves high-frequency market data pipelines, event-driven architecture, and financial anomaly detection using LSTMs. Telemetry-rich pipelines tuned for low latency are critical for maintaining alpha.
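As a simplified illustration of the signal side of pairs trading, the sketch below estimates a hedge ratio by least squares, forms the spread, and turns its z-score into an entry signal. It is a sketch under stated assumptions: it does not test for cointegration, it uses the full window rather than a rolling one, and the function names and the `entry` threshold are hypothetical.

```python
from statistics import mean, pstdev


def hedge_ratio(x: list[float], y: list[float]) -> float:
    """Least-squares slope of y on x (covariance over variance)."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var = sum((a - mx) ** 2 for a in x)
    return cov / var


def spread_zscore(x: list[float], y: list[float]) -> float:
    """Z-score of the latest spread value relative to the window."""
    beta = hedge_ratio(x, y)
    spread = [b - beta * a for a, b in zip(x, y)]
    sigma = pstdev(spread)
    if sigma == 0:
        return 0.0  # flat spread: no signal
    return (spread[-1] - mean(spread)) / sigma


def signal(z: float, entry: float = 2.0) -> str:
    """Mean-reversion rule: fade the spread when it is stretched."""
    if z >= entry:
        return "short_spread"
    if z <= -entry:
        return "long_spread"
    return "flat"
```

A production version would recompute the hedge ratio and z-score on a rolling window as ticks arrive, and would gate trading on a stationarity test of the spread.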

Why choose a full-stack AI engineer for your MVP?

A full-stack AI engineer provides the agility of a solo founder with the technical depth of an entire team. With one engineer handling everything from Dockerized AI environments to serverless backend orchestration, the architecture stays cohesive and is built to scale from day one.

Frequently Asked Questions

Common questions about how I work and what to expect.

Let's Build Something Great

I'm always interested in working on exciting projects and collaborations.